Structured Output, Function Calling, and MCP

0. Introduction

This article uses a single running example, "How's the weather?", to explain three concepts: Function Calling, Structured Output, and MCP. All examples are run against qwen/qwen3-4b-2507 served locally by LM Studio.

1. Function Calling

1.1. Tool

1.1.1 Why

A plain LLM is purely conversational: one question in, one answer out. It can chat, but it cannot interact with the real world. Ask it the classic "How's the weather today?" and it either cannot answer or simply makes an answer up.

The traditional workaround goes like this:

- The user sends: "I need to commute to and from work in Chengdu tomorrow, how's the weather?"

- Before forwarding the request to the LLM, the backend parses the message and spots keywords such as "tomorrow", "weather", and "Chengdu".

- The backend calls a method prepared in advance for this case to fetch the weather, for example:

```java
Weather w = weatherService.getWeather("Chengdu", DateUtil.tomorrow());
```

- It then inserts the weather information into the user message, e.g. "Tomorrow in Chengdu: high 18°C, low 12°C, light rain, 70% chance of precipitation. I need to commute to and from work in Chengdu tomorrow, how's the weather?"

- The model now neither fabricates nor refuses; it answers from the information the backend inserted.
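A minimal Java sketch of this keyword-driven pre-processing. The class, its methods, and the keyword list are hypothetical, chosen only for illustration; the last two calls in `main` preview the failure modes discussed next.

```java
import java.util.List;
import java.util.Locale;

// Hypothetical sketch of the "parse keywords before calling the LLM" workaround.
class KeywordWeatherInjector {
    // Illustrative keyword list; a real backend would need many such rules.
    static final List<String> WEATHER_KEYWORDS = List.of("weather", "temperature", "forecast");

    // True when any weather keyword appears in the message (case-insensitive).
    static boolean needsWeather(String userMessage) {
        String lower = userMessage.toLowerCase(Locale.ROOT);
        return WEATHER_KEYWORDS.stream().anyMatch(lower::contains);
    }

    // Prepends already-fetched weather text to the message when a keyword matches.
    static String augment(String userMessage, String weatherText) {
        return needsWeather(userMessage) ? weatherText + " " + userMessage : userMessage;
    }

    public static void main(String[] args) {
        String weather = "Tomorrow in Chengdu: light rain, 70% chance of precipitation.";
        System.out.println(augment("I need to commute to and from work in Chengdu tomorrow, how's the weather?", weather));
        System.out.println(needsWeather("I need to commute to and from work in Chengdu tomorrow, will it rain?")); // false: missed
        System.out.println(needsWeather("...another gloomy weather day killing me")); // true: irrelevant match
    }
}
```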

This approach has obvious problems:

- Keyword parsing is fragile. If the user instead asks "I need to commute to and from work in Chengdu tomorrow, will it rain?", parsing fails and a request that needs weather data is missed. Conversely, "...another gloomy weather day killing me" does match, but the weather data is useless: it pollutes the context and wastes money on the AI call.

- Scenarios must be preset: the backend has to anticipate many scenarios and write matching parsing rules for each external data source.

- The model is not in control and cannot reach the real world itself: it is the backend that uses the tools, and the product is still just a chat box. When the model needs weather information, it has no way to fetch it on its own.

1.1.2 What

The example shows that an LLM cannot obtain information on its own; it can only passively receive whatever arrives in the user's request. Function Calling gives the LLM a way to interact with the outside world: using an agreed format, the model tells the backend which tool to call and with which arguments, and the backend puts the result back into the context. This lets the model obtain external information or act on external data.

Conceptually, Function Calling has three key points:

- Tool definition

`tools` tells the LLM each tool's name, its description, and a JSON Schema for its parameters:

```json
"tools": [
  {
    "type": "function",
    "function": {
      "name": "get_weather",
      "description": "Get weather information for specified city. Use this tool when users ask about weather, precipitation, temperature, etc.",
      "parameters": {
        "type": "object",
        "properties": {
          "location": {
            "type": "string",
            "description": "City name, e.g.: Chengdu, Beijing"
          },
          "extensions": {
            "type": "string",
            "enum": ["base", "all"],
            "description": "Weather type:\n - base: Returns current weather, suitable for querying \"now\", \"current\", \"real-time\" weather\n - all: Returns forecast weather (next 3-4 days), suitable for querying \"tomorrow\", \"day after tomorrow\", \"next few days\" weather"
          }
        },
        "required": ["location", "extensions"]
      }
    }
  }
]
```

- Tool call

`tool_calls`: when the model decides it needs to run a tool, it returns an assistant message (`AIMessage`) whose `tool_calls` field holds the `id`, `name`, and `arguments` of each call. The call below amounts to "get the forecast for Chengdu":

```json
"tool_calls": [
  {
    "type": "function",
    "id": "606046057",
    "function": {
      "name": "get_weather",
      "arguments": "{\"location\":\"Chengdu\",\"extensions\":\"all\"}"
    }
  }
]
```

- Tool result message

`ToolExecutionResultMessage`: the backend receives the model's message, reads `tool_calls`, runs the method matching each tool name with the given arguments, and wraps the result in a message with `"role": "tool"` and a `tool_call_id` equal to the corresponding call's `id`. This message is appended to the message history so the model can see it.

In short, Function Calling lets the LLM actively obtain information and act on the outside world through a standardized interface.
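To make the three pieces concrete, here is a Java 17 sketch of the backend side: it looks up a handler by tool name, runs it on the raw `arguments` string, and returns a `"tool"` message carrying the same id. `ToolCall`, `ToolResultMessage`, and `ToolDispatcher` are hypothetical stand-ins, not classes from any particular SDK.

```java
import java.util.Map;
import java.util.function.Function;

// Hypothetical stand-ins for the messages described above (not a real SDK's classes).
record ToolCall(String id, String name, String arguments) {}
record ToolResultMessage(String role, String toolCallId, String name, String content) {}

class ToolDispatcher {
    // Tool name -> handler that receives the raw JSON "arguments" string.
    private final Map<String, Function<String, String>> handlers;

    ToolDispatcher(Map<String, Function<String, String>> handlers) {
        this.handlers = handlers;
    }

    // Runs one tool call and wraps the output in a "tool" message that
    // reuses the call's id, so the model can pair call and result.
    ToolResultMessage execute(ToolCall call) {
        Function<String, String> handler = handlers.get(call.name());
        String content = (handler == null)
                ? "{\"error\":\"unknown tool: " + call.name() + "\"}"
                : handler.apply(call.arguments());
        return new ToolResultMessage("tool", call.id(), call.name(), content);
    }

    public static void main(String[] args) {
        // Stub handler in place of a real weather service.
        ToolDispatcher dispatcher = new ToolDispatcher(Map.of(
                "get_weather", arguments -> "{\"dayweather\":\"Overcast\",\"nightweather\":\"Light Rain\"}"));
        ToolCall call = new ToolCall("606046057", "get_weather",
                "{\"location\":\"Chengdu\",\"extensions\":\"all\"}");
        System.out.println(dispatcher.execute(call));
    }
}
```

Returning an error payload for unknown tools, rather than throwing, is one design choice among several: it lets the model see the failure and recover in its next turn.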

1.1.3 How

The Function Calling flow itself is simple. In the example below, the tool definitions are sent along with the user message, and the model answers with a tool call instead of text.

Request Body:

```json
{
  "model": "qwen3-4b-2507",
  "tools": [
    {
      "type": "function",
      "function": {
        "name": "get_weather",
        "description": "Get weather information for specified city. Use this tool when users ask about weather, precipitation, temperature, etc.",
        "parameters": {
          "type": "object",
          "properties": {
            "location": {
              "type": "string",
              "description": "City name, e.g.: Chengdu, Beijing"
            },
            "extensions": {
              "type": "string",
              "enum": ["base", "all"],
              "description": "Weather type:\n - base: Returns current weather, suitable for querying \"now\", \"current\", \"real-time\" weather\n - all: Returns forecast weather (next 3-4 days), suitable for querying \"tomorrow\", \"day after tomorrow\", \"next few days\" weather"
            }
          },
          "required": ["location", "extensions"]
        }
      }
    }
  ],
  "messages": [
    {
      "role": "user",
      "content": "I need to commute to and from work in Chengdu tomorrow, will it rain?"
    }
  ]
}
```

Response Body:

```json
{
  "id": "chatcmpl-g23i230saulvl49aru2okl",
  "object": "chat.completion",
  "created": 1763727823,
  "model": "qwen/qwen3-4b-2507",
  "choices": [
    {
      "index": 0,
      "message": {
        "role": "assistant",
        "content": "",
        "tool_calls": [
          {
            "type": "function",
            "id": "606046057",
            "function": {
              "name": "get_weather",
              "arguments": "{\"location\":\"Chengdu\",\"extensions\":\"all\"}"
            }
          }
        ]
      },
      "logprobs": null,
      "finish_reason": "tool_calls"
    }
  ],
  "usage": {
    "prompt_tokens": 275,
    "completion_tokens": 26,
    "total_tokens": 301
  },
  "stats": {},
  "system_fingerprint": "qwen/qwen3-4b-2507"
}
```

The response contains a tool call with id `606046057` and `finish_reason` `"tool_calls"`. The backend reads it, runs the tool to fetch the weather, appends both the assistant message and the tool result to the message history, and calls the LLM again.
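This round trip is usually wrapped in a loop: call the model, and while `finish_reason` is `"tool_calls"`, run the requested tools, append the results, and call again. Below is a Java 17 sketch under stated assumptions: the model is abstracted as a plain function from history to reply (a hypothetical interface, so no real HTTP client is involved), and `maxRounds` is an illustrative safety limit. All type names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;

// Hypothetical, simplified types; a real client would serialize these to the JSON shown above.
record ToolCall(String id, String name, String arguments) {}
record Message(String role, String content, List<ToolCall> toolCalls, String toolCallId) {}
record ModelReply(String content, List<ToolCall> toolCalls, String finishReason) {}

class ToolLoop {
    // Calls the model until it stops requesting tools, giving up after maxRounds.
    static String run(Function<List<Message>, ModelReply> model,
                      Function<ToolCall, String> executeTool,
                      List<Message> history, int maxRounds) {
        for (int round = 0; round < maxRounds; round++) {
            ModelReply reply = model.apply(history);
            if (!"tool_calls".equals(reply.finishReason())) {
                return reply.content(); // final answer for the user
            }
            // Echo the assistant turn (with its tool_calls) back into the history...
            history.add(new Message("assistant", reply.content(), reply.toolCalls(), null));
            // ...then append one "tool" message per call, linked by tool_call_id.
            for (ToolCall call : reply.toolCalls()) {
                history.add(new Message("tool", executeTool.apply(call), List.of(), call.id()));
            }
        }
        throw new IllegalStateException("model still requesting tools after " + maxRounds + " rounds");
    }

    public static void main(String[] args) {
        // Fake model: asks for get_weather first, then answers from the tool result.
        Function<List<Message>, ModelReply> fakeModel = msgs -> {
            Message last = msgs.get(msgs.size() - 1);
            if (last.role().equals("user")) {
                return new ModelReply("", List.of(new ToolCall("606046057", "get_weather",
                        "{\"location\":\"Chengdu\",\"extensions\":\"all\"}")), "tool_calls");
            }
            return new ModelReply("Based on the forecast: " + last.content(), List.of(), "stop");
        };
        List<Message> history = new ArrayList<>();
        history.add(new Message("user", "Will it rain in Chengdu tomorrow?", List.of(), null));
        System.out.println(run(fakeModel, call -> "Light rain at night", history, 5));
    }
}
```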

Here, the `get_weather` tool is implemented with the Amap weather query API.
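For reference, a `get_weather` handler backed by Amap might build its request URL as sketched below. The endpoint and the `key`, `city`, and `extensions` parameters reflect our understanding of Amap's public weather API (its `extensions` values `base`/`all` are what the tool's enum mirrors); `city` is documented as an administrative code (adcode) rather than a name, and `510100` for Chengdu in `main` is an assumption to verify. The class name is hypothetical.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;

// Hypothetical helper that builds an Amap weather query URL.
// Endpoint and parameter names are assumptions; check Amap's documentation.
class AmapWeatherUrl {
    static final String ENDPOINT = "https://restapi.amap.com/v3/weather/weatherInfo";

    // cityAdcode: an administrative code, not a city name.
    // extensions: "base" (current weather) or "all" (forecast), same values as the tool's enum.
    static URI build(String apiKey, String cityAdcode, String extensions) {
        if (!extensions.equals("base") && !extensions.equals("all")) {
            throw new IllegalArgumentException("extensions must be base or all");
        }
        String query = "key=" + enc(apiKey) + "&city=" + enc(cityAdcode) + "&extensions=" + enc(extensions);
        return URI.create(ENDPOINT + "?" + query);
    }

    // Percent-encodes a query parameter value.
    private static String enc(String s) {
        return URLEncoder.encode(s, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // "510100" is assumed to be Chengdu's adcode; YOUR_KEY is a placeholder.
        System.out.println(build("YOUR_KEY", "510100", "all"));
    }
}
```

The resulting URI could then be fetched with `java.net.http.HttpClient`, and the response body returned as the tool result.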

Second request body (tool result appended):

```json
{
  "model": "qwen/qwen3-4b-2507",
  "messages": [
    {
      "role": "user",
      "content": "I need to commute to and from work in Chengdu tomorrow, how's the weather?"
    },
    {
      "content": "",
      "role": "assistant",
      "tool_calls": [
        {
          "id": "606046057",
          "function": {
            "arguments": "{\"location\":\"Chengdu\",\"extensions\":\"all\"}",
            "name": "get_weather"
          },
          "type": "function"
        }
      ]
    },
    {
      "role": "tool",
      "tool_call_id": "606046057",
      "name": "get_weather",
      "content": "{\"status\": \"1\", \"count\": \"1\", \"info\": \"OK\", \"infoc... <Truncated in logs> ... \"nighttemp_float\": \"8.0\"}]}], \"_city_name\": \"Chengdu\"}"
    }
  ]
}
```

Note: the appended `ToolExecutionResultMessage` (the `"role": "tool"` message) carries a `tool_call_id` that must equal the `id` in the assistant message's `tool_calls`; this is how each call is matched with its result.
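Before sending the follow-up request, it can be worth checking that every `tool_calls` id has a matching `tool_call_id`. A minimal Java sketch; the class name and the second id `606046058` are hypothetical.

```java
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

// Hypothetical pre-flight check: each assistant tool_call id should be
// answered by a tool message with the same tool_call_id.
class ToolCallMatcher {
    // Returns the tool_call ids that have no matching tool message, in call order.
    static Set<String> unanswered(List<String> toolCallIds, List<String> toolMessageIds) {
        Set<String> missing = new LinkedHashSet<>(toolCallIds);
        missing.removeAll(toolMessageIds);
        return missing;
    }

    public static void main(String[] args) {
        System.out.println(unanswered(List.of("606046057"), List.of("606046057"))); // []
        System.out.println(unanswered(List.of("606046057", "606046058"), List.of("606046057"))); // [606046058]
    }
}
```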

2. Structured Output

2.1 Introduction

Section 1's tool calling solves the problem of the LLM being unable to obtain external information.

But the LLM still outputs only natural language, and free text is hard to use anywhere except a chat box. If the LLM could output standardized data, many more use cases would open up.

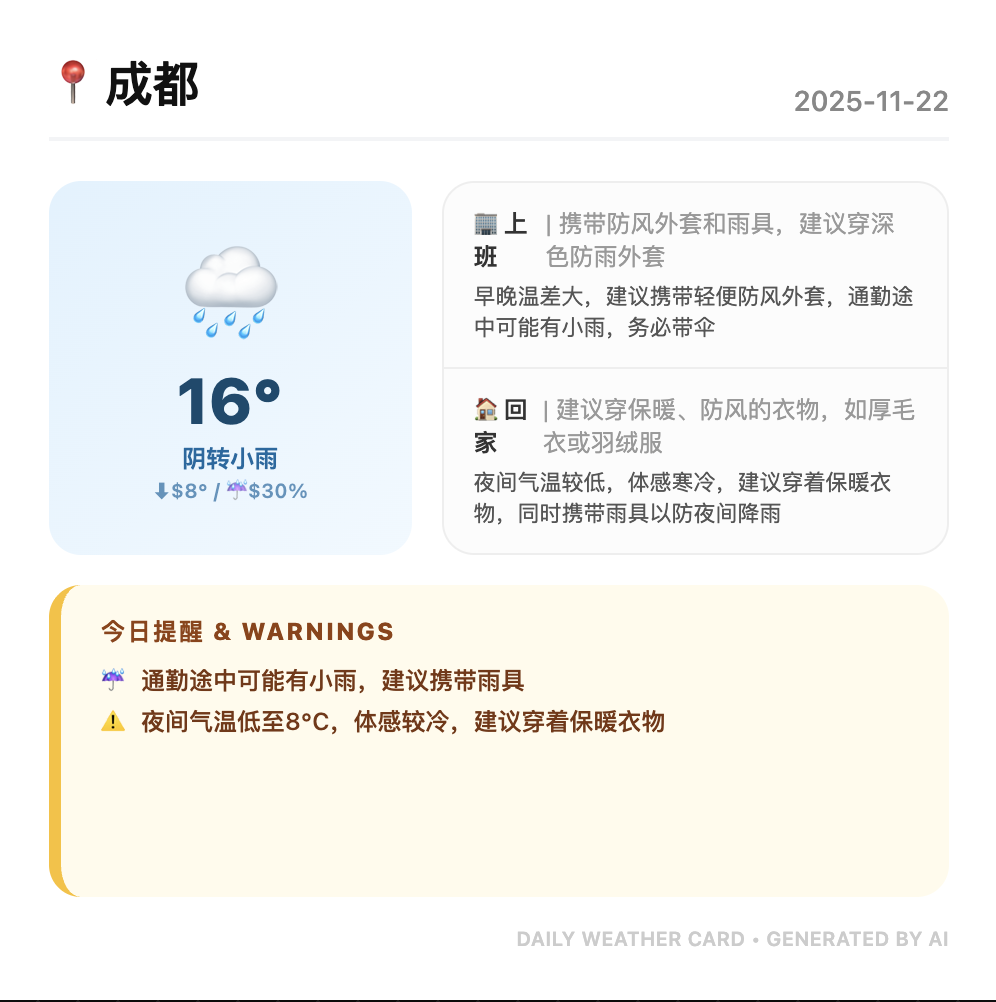

Take the familiar weather card as an example. If the LLM outputs standardized, structured data, the card's content can be produced entirely by the LLM and personalized with what it remembers about the user, for example:

![Weather card screenshot](<Screenshot 2025-11-21 23.43.24.png>)

With structured output from the LLM, the frontend can render the weather card directly. The JSON behind the card above is:

```json
{
  "location": "Chengdu",
  "date": "2025-11-22",
  "weather": {
    "summary": "Overcast turning to Light Rain",
    "temp_high": 16,
    "temp_low": 8,
    "condition": "Overcast turning to Light Rain",
    "rain_probability": 0.3
  },
  "commute_advice": {
    "go_to_work": {
      "need_umbrella": true,
      "suggest_clothing": "Carry windproof jacket and rain gear, suggest wearing dark rainproof jacket",
      "text": "Large temperature difference between morning and evening, suggest carrying a light windproof jacket, may have light rain during commute, be sure to bring an umbrella"
    },
    "back_home": {
      "need_umbrella": true,
      "suggest_clothing": "Suggest wearing warm, windproof clothing, such as thick sweater or down jacket",
      "text": "Night temperature is lower, feels cold, suggest wearing warm clothing, also carry rain gear for night rainfall"
    }
  },
  "alerts": [
    {
      "level": "warning",
      "type": "rain",
      "message": "May have light rain during commute, suggest carrying rain gear"
    },
    {
      "level": "warning",
      "type": "cold",
      "message": "Night temperature drops to 8°C, feels relatively cold, suggest wearing warm clothing"
    }
  ]
}
```
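On the consuming side, the card is just typed data. A Java 17 sketch of records mirroring the JSON above (snake_case fields renamed to camelCase), plus a hypothetical text renderer standing in for real UI code; deserializing the JSON into these records, for example with a JSON library, is left out. All names are hypothetical.

```java
import java.util.List;

// Hypothetical Java types mirroring the weather-card JSON.
record Weather(String summary, double tempHigh, double tempLow, String condition, double rainProbability) {}
record Advice(boolean needUmbrella, String suggestClothing, String text) {}
record CommuteAdvice(Advice goToWork, Advice backHome) {}
record Alert(String level, String type, String message) {}
record WeatherCard(String location, String date, Weather weather, CommuteAdvice commuteAdvice, List<Alert> alerts) {}

class CardRenderer {
    // Builds a one-line text summary; a frontend would bind the same fields to widgets.
    static String render(WeatherCard c) {
        StringBuilder sb = new StringBuilder()
                .append(c.location()).append(' ').append(c.date()).append(": ")
                .append(c.weather().summary())
                .append(", high ").append(Math.round(c.weather().tempHigh()))
                .append(" / low ").append(Math.round(c.weather().tempLow()));
        CommuteAdvice a = c.commuteAdvice();
        if (a.goToWork().needUmbrella() || a.backHome().needUmbrella()) {
            sb.append(" | bring an umbrella");
        }
        for (Alert alert : c.alerts()) {
            sb.append(" | [").append(alert.level()).append("] ").append(alert.message());
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        WeatherCard card = new WeatherCard("Chengdu", "2025-11-22",
                new Weather("Overcast turning to Light Rain", 16, 8, "Overcast turning to Light Rain", 0.3),
                new CommuteAdvice(
                        new Advice(true, "Carry windproof jacket and rain gear", "May have light rain during commute"),
                        new Advice(true, "Warm, windproof clothing", "Night temperature is lower")),
                List.of(new Alert("warning", "rain", "May have light rain during commute, suggest carrying rain gear")));
        System.out.println(render(card));
    }
}
```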

You might think that simply instructing the model in the prompt would work too. It often does: current LLMs follow instructions well, and the JSON above was in fact produced by constraining the format in the system prompt alone, without Structured Output.

System prompt:

```
You are a "Commute Weather Card Generator".

Input will contain:
- user_profile: User profile
- commute: Commute information
- weather: Real weather data

Please generate a commute weather card JSON based on this information, fields are:
{
  "location": string,
  "date": string, // YYYY-MM-DD
  "weather": {
    "summary": string,
    "temp_high": number,
    "temp_low": number,
    "condition": string,
    "rain_probability": number // 0~1
  },
  "commute_advice": {
    "go_to_work": {
      "need_umbrella": boolean,
      "suggest_clothing": string,
      "text": string
    },
    "back_home": {
      "need_umbrella": boolean,
      "suggest_clothing": string,
      "text": string
    }
  },
  "alerts": [
    {
      "level": "info" | "warning" | "danger",
      "type": "rain" | "cold" | "heat" | "wind",
      "message": string
    }
  ]
}

Requirements:
1. Only output one JSON object, do not output any explanatory text or Markdown.
2. Field names must match the above exactly.
```

The biggest problem is reliability. Every reply has to be validated, parsed, and retried on failure: the model may suddenly prepend something like "Reasons are as follows:", so the success rate cannot be guaranteed, and the longer the context, the more easily the model drifts from the format.
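The extra work that prompt-only JSON demands looks roughly like this validate-and-retry wrapper. It is a hypothetical Java sketch: the model is modeled as a `Supplier<String>`, and the validity check is intentionally crude (a real one would parse the JSON and check the required fields).

```java
import java.util.Iterator;
import java.util.List;
import java.util.function.Predicate;
import java.util.function.Supplier;

// Hypothetical validate-and-retry wrapper for prompt-only JSON output.
class PromptJsonRetry {
    // Deliberately rough check: the reply must start and end like a JSON object.
    static boolean looksLikeJsonObject(String reply) {
        String t = reply.strip();
        return t.startsWith("{") && t.endsWith("}");
    }

    // Each failed attempt costs another full model call.
    static String generate(Supplier<String> model, Predicate<String> isValid, int maxAttempts) {
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            String reply = model.get();
            if (isValid.test(reply)) {
                return reply;
            }
        }
        throw new IllegalStateException("no valid JSON after " + maxAttempts + " attempts");
    }

    public static void main(String[] args) {
        // Fake model: the first reply has a chatty preamble, the second is bare JSON.
        Iterator<String> replies = List.of(
                "Reasons are as follows: {\"location\":\"Chengdu\"}",
                "{\"location\":\"Chengdu\"}").iterator();
        System.out.println(generate(replies::next, PromptJsonRetry::looksLikeJsonObject, 3));
    }
}
```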

Structured Output makes the output structure reliable: the request carries a JSON Schema, and the server constrains the reply to that schema instead of merely asking for it in the prompt.

2.2 Usage

To use Structured Output, include a JSON Schema in the request. For the weather card above, the schema would be:

```json
{
  "type": "object",
  "properties": {
    "location": { "type": "string" },
    "date": { "type": "string", "format": "date" },
    "weather": {
      "type": "object",
      "properties": {
        "summary": { "type": "string" },
        "temp_high": { "type": "number" },
        "temp_low": { "type": "number" },
        "condition": { "type": "string" },
        "rain_probability": { "type": "number" }
      },
      "required": ["summary", "temp_high", "temp_low", "condition"]
    },
    "commute_advice": {
      "type": "object",
      "properties": {
        "go_to_work": {
          "type": "object",
          "properties": {
            "need_umbrella": { "type": "boolean" },
            "suggest_clothing": { "type": "string" },
            "text": { "type": "string" }
          },
          "required": ["need_umbrella", "text"]
        },
        "back_home": {
          "type": "object",
          "properties": {
            "need_umbrella": { "type": "boolean" },
            "suggest_clothing": { "type": "string" },
            "text": { "type": "string" }
          },
          "required": ["need_umbrella", "text"]
        }
      },
      "required": ["go_to_work", "back_home"]
    },
    "alerts": {
      "type": "array",
      "items": {
        "type": "object",
        "properties": {
          "level": { "type": "string", "enum": ["info", "warning", "danger"] },
          "type": { "type": "string", "enum": ["rain", "cold", "heat", "wind"] },
          "message": { "type": "string" }
        },
        "required": ["level", "type", "message"]
      }
    }
  },
  "required": ["location", "date", "weather", "commute_advice"]
}
```

Then attach the schema to the request body via `response_format`:

```json
{
  "model": "qwen3-4b-2507",
  "messages": [
    {
      "role": "system",
      "content": "You are a commute weather card generator. Please generate a commute weather card JSON based on input."
    },
    {
      "role": "user",
      "content": "Target user profile:\n- Not very cold-resistant, feels cold hands and feet when temperature is below 20°C.\n- Schedule is alternating weeks, this week is a work week, need normal commute.\n- Usually leaves for work at 10:00 on workdays, gets off work at 22:00, commute method is subway + walking.\n\nToday's commute weather card to generate:\n- City: Chengdu\n- Date: 2025-11-22\n\nBelow is the already queried weather data (do not query weather again, only use this data):\n\n{\n \"date\": \"2025-11-22\",\n \"location\": \"Chengdu\",\n \"week\": \"6\",\n \"dayweather\": \"Overcast\",\n \"nightweather\": \"Light Rain\",\n \"daytemp\": \"16\",\n \"nighttemp\": \"8\",\n \"daywind\": \"North\",\n \"nightwind\": \"North\",\n \"daypower\": \"1-3\",\n \"nightpower\": \"1-3\",\n \"daytemp_float\": \"16.0\",\n \"nighttemp_float\": \"8.0\"\n}"
    }
  ],
  "response_format": {
    "type": "json_schema",
    "json_schema": {
      "name": "WeatherAdviceResponse",
      "schema": <the JSON Schema above>,
      "strict": true
    }
  }
}
```

`response_format` actually accepts three types:

- `text`: the default; returns plain text.

- `json_object`: guarantees syntactically valid JSON, without enforcing any particular schema.

- `json_schema`: guarantees output that conforms to the supplied JSON Schema.

Support differs between models and servers; most current OpenAI models, for example, support all of them.
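The three variants differ only in the `response_format` object. A Java 17 sketch that builds each one as a JSON string; the enum and class names are hypothetical, and the schema is inserted verbatim, so it must already be valid JSON.

```java
// Hypothetical helper producing the three response_format variants as JSON text.
enum ResponseMode { TEXT, JSON_OBJECT, JSON_SCHEMA }

class ResponseFormat {
    // name must not contain quotes; schemaJson is inserted verbatim and must be valid JSON.
    static String build(ResponseMode mode, String name, String schemaJson) {
        return switch (mode) {
            case TEXT -> "{\"type\":\"text\"}";
            case JSON_OBJECT -> "{\"type\":\"json_object\"}";
            case JSON_SCHEMA -> "{\"type\":\"json_schema\",\"json_schema\":{\"name\":\"" + name
                    + "\",\"schema\":" + schemaJson + ",\"strict\":true}}";
        };
    }

    public static void main(String[] args) {
        String schema = "{\"type\":\"object\",\"properties\":{\"location\":{\"type\":\"string\"}},\"required\":[\"location\"]}";
        System.out.println(build(ResponseMode.JSON_SCHEMA, "WeatherAdviceResponse", schema));
    }
}
```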

The reply then comes back in the requested format.

3. MCP

3.0 Introduction

Section 1 (Function Calling) described the limitations of the traditional approach:

- The backend must preset scenarios: weather queries have to be anticipated, with parsing and data fetching implemented in advance.

- Scenario parsing is unreliable: scenarios get missed or misjudged, so needed information is missing or useless information is added.

The LLM now has two capabilities:

- Function Calling: it can actively call the tools it is given to interact with the outside world.

- Structured Output: it can reply in a given JSON Schema format; Function Calling relies on the same idea, since tool-call arguments follow each tool's parameter schema.

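In fact, OpenAI's Structured Outputs feature covers both `response_format: { type: "json_schema", json_schema: {...} }` and function tools declared with `"strict": true` (in Chat Completions the flag sits inside the function object: `tools: [{"type": "function", "function": {..., "strict": true}}]`), so both the final reply and the tool-call arguments can be fully constrained by a JSON Schema.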

This is already powerful: give the model `get_weather`, `search_news`, `write_memory`, and `read_memory` tools, and it can remember things about us and produce personalized, structured content.

One limitation of the traditional approach remains, though: the backend still has to preset scenarios. A programmer must think of each scenario and write code for the corresponding tools.

- For the LLM to work with third-party services, such as searching for a repo on GitHub, reading a page in Notion, or posting a note on Xiaohongshu, someone has to write these tool wrappers in advance and hand them to the LLM.

- Reusing the same tools in another AI application means copying the code again, possibly with adjustments.

- Sharing the tools without exposing their implementation details is awkward.

MCP addresses these problems by separating tool providers from tool consumers and standardizing how tools are defined and described, so that models can use them to interact with the outside world.

3.1 What & Why

MCP (Model Context Protocol) is an open protocol built on JSON-RPC 2.0. A server can expose three kinds of capabilities: Tools, Resources, and Prompts; Tools are by far the most commonly used.
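Because MCP rides on JSON-RPC 2.0, discovering and calling tools are ordinary request messages with the methods `tools/list` and `tools/call`. A minimal Java sketch that only formats those requests; transport and the initial `initialize` handshake are omitted, and the helper names and the `get_weather` tool are hypothetical. Note that in `tools/call`, `arguments` is a JSON object, whereas Function Calling's `arguments` is a JSON-encoded string.

```java
// Hypothetical helpers that format MCP JSON-RPC 2.0 request messages as strings.
// A real session first performs the "initialize" handshake, omitted here.
class McpRequests {
    // Asks the server which tools it offers.
    static String toolsList(int id) {
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id + ",\"method\":\"tools/list\"}";
    }

    // Invokes one tool; argumentsJson must be a JSON object and is inserted verbatim.
    static String toolsCall(int id, String toolName, String argumentsJson) {
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id
                + ",\"method\":\"tools/call\",\"params\":{\"name\":\"" + toolName
                + "\",\"arguments\":" + argumentsJson + "}}";
    }

    public static void main(String[] args) {
        System.out.println(toolsList(1));
        System.out.println(toolsCall(2, "get_weather", "{\"location\":\"Chengdu\",\"extensions\":\"all\"}"));
    }
}
```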

Why is MCP needed?

Function Calling solves how a model tells a program "I want to call a tool"; MCP solves how tools are shared, discovered, managed, and reused.

Three roles are involved:

- MCP Server: the provider. It implements tools and exposes their definitions according to the MCP protocol.

- MCP Client: the consumer. It discovers tools and calls them according to the MCP protocol.

- Host: the application. It converts the tool list obtained by the MCP Client into the model's `tools`; when the model returns `tool_calls`, it calls the MCP Server through the MCP Client and turns the result into a `ToolExecutionResultMessage`.
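The Host's conversion step is mostly a field mapping: each MCP tool's `name`, `description`, and `inputSchema` become a Chat Completions function tool whose `parameters` is that schema. A Java 17 sketch with hypothetical names, working on raw JSON strings to stay dependency-free.

```java
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical view of one tools/list entry: name, description, and inputSchema (raw JSON text).
record McpTool(String name, String description, String inputSchemaJson) {}

class HostToolAdapter {
    // Minimal JSON string escaping (backslash, quote, newline) for this sketch only.
    static String quote(String s) {
        return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"").replace("\n", "\\n") + "\"";
    }

    // MCP inputSchema becomes the "parameters" of a Chat Completions function tool.
    static String toFunctionTool(McpTool t) {
        return "{\"type\":\"function\",\"function\":{\"name\":" + quote(t.name())
                + ",\"description\":" + quote(t.description())
                + ",\"parameters\":" + t.inputSchemaJson() + "}}";
    }

    // Builds the "tools" array the Host sends to the model.
    static String toToolsArray(List<McpTool> tools) {
        return tools.stream().map(HostToolAdapter::toFunctionTool)
                .collect(Collectors.joining(",", "[", "]"));
    }

    public static void main(String[] args) {
        McpTool weather = new McpTool("get_weather", "Get weather information for specified city.",
                "{\"type\":\"object\",\"properties\":{\"location\":{\"type\":\"string\"}},\"required\":[\"location\"]}");
        System.out.println(toToolsArray(List.of(weather)));
    }
}
```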

Benefits:

- Easy integration: no tool logic has to be added inside the program; you simply add an MCP Server, as you would in Cursor or Claude Code.

- Hidden implementation: what is exposed is a black box that receives requests and returns results, with internal details kept private.

- Dynamic management: the MCP Client discovers tools at runtime via `tools/list`, and individual tools can also be disabled manually.
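Manual disabling can be as simple as filtering the discovered tool names before they are handed to the model. A Java 17 sketch with hypothetical names; a Host would also re-run discovery when the server's tools change (the MCP specification defines a `notifications/tools/list_changed` notification for this).

```java
import java.util.List;
import java.util.Set;

// Hypothetical Host-side filter applied to tool names discovered via tools/list.
class ToolFilter {
    // Keeps discovery order and drops any tool the user has disabled.
    static List<String> enabled(List<String> discovered, Set<String> disabled) {
        return discovered.stream().filter(name -> !disabled.contains(name)).toList();
    }

    public static void main(String[] args) {
        List<String> discovered = List.of("get_weather", "search_news", "write_memory", "read_memory");
        System.out.println(enabled(discovered, Set.of("write_memory"))); // [get_weather, search_news, read_memory]
    }
}
```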

3.2 MCP Operation Flow

Flowchart is as follows:

Case Study: Amap MCP: